I’ve been noticing something for a while. Fear-based security presentations change one thing reliably — the budget.

Everything else stays exactly the same.

I’ve seen it in boardrooms where the threat landscape briefing produced budget approval and cultural indifference. I’ve seen it in security awareness sessions where the phishing video produced genuine shock and identical click rates six months later. And I saw it most clearly when I recently watched a vendor present to a CISO.

The vendor was good. The content was compelling. The threat data was current, specific, and genuinely alarming. The CISO leaned forward. Asked sharp questions. Left the room visibly concerned.

Three months later the organisation hadn’t changed its programme. The budget hadn’t moved. The behaviour metrics were identical.

The vendor had done everything right. The fear had worked perfectly.

For about three months.

Then it faded. The concern dissipated. The urgency passed. And the behaviour — the actual day-to-day decisions of the people in that organisation that determine whether an attack succeeds or fails — remained exactly what it had been before.

That’s not a failure of the vendor. It’s not a failure of the CISO. It’s a failure of the model. And the research that shows why has been sitting in academic journals since 1972.

This post focuses on one of the three critical conversations I wrote about last week, the conversations that determine whether cyber becomes genuinely embedded in your organisation’s culture. The employee conversation is the most scientifically rich, and the one organisations most consistently get wrong.

The Science Has Known This For Fifty Years

In 1972 psychologist Daryl Bem published his self-perception theory: the finding that people don’t just behave according to who they are. They become who they are through how they behave. Identity and behaviour are not fixed and sequential. They’re circular and mutually reinforcing.

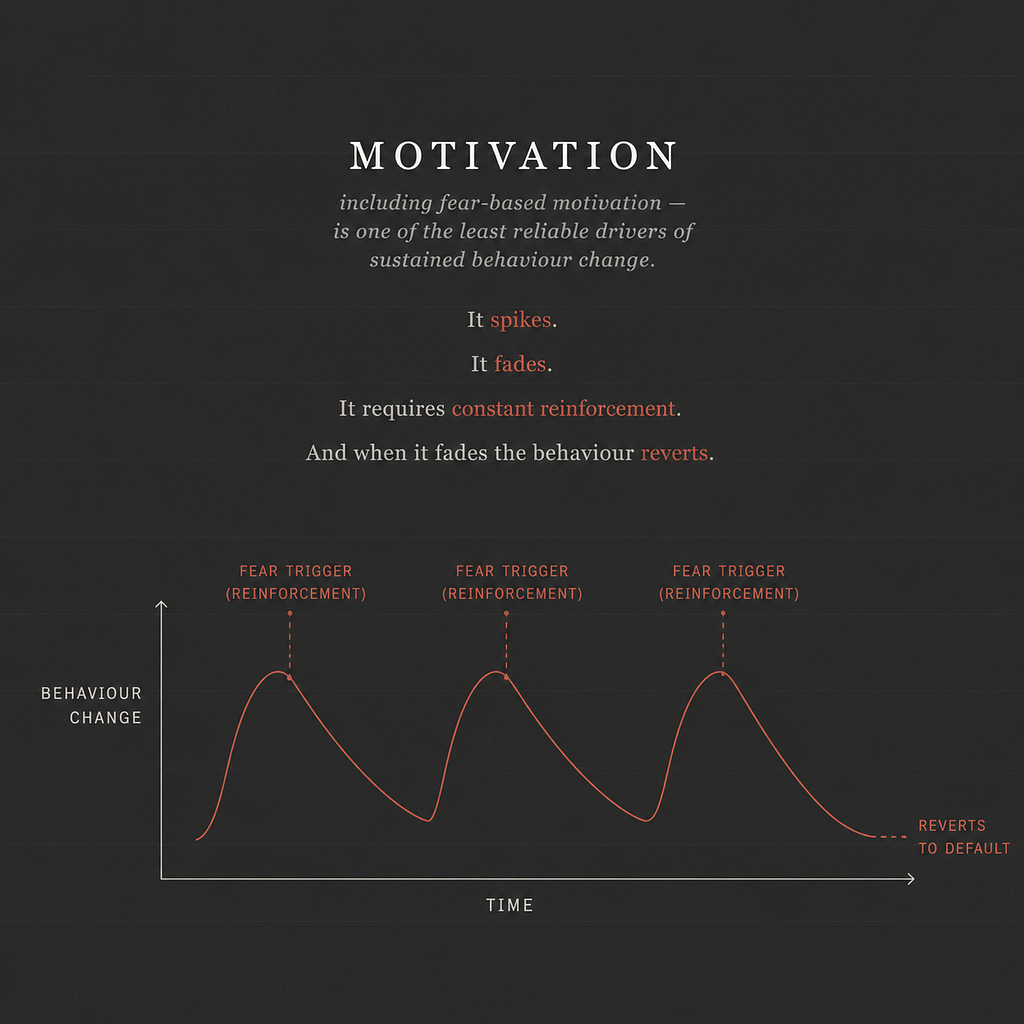

BJ Fogg at Stanford spent 20 years studying behaviour change and reached a conclusion that should make every security awareness professional uncomfortable. Motivation, including fear-based motivation, is one of the least reliable drivers of sustained behaviour change. It spikes. It fades. It requires constant reinforcement. And when it fades the behaviour reverts.

Dan Ariely’s research on identity and decision-making showed something even more specific. When people are primed to think of themselves in a particular role — as a protector, as someone responsible for others, as a person of integrity — their subsequent decisions consistently reflect that identity. The identity doesn’t follow the behaviour. The behaviour follows the identity.

Richard Thaler and Cass Sunstein’s work on nudge theory and choice architecture showed that behaviour change doesn’t always require motivation or conscious decision-making. By designing the environment so that the desired behaviour is the path of least resistance — and by framing that behaviour as what someone like you naturally does — nudges produce consistent, low-effort behaviour change precisely in the high-pressure, low-attention moments where motivation-based approaches fail.

James Clear synthesised much of this research accessibly in Atomic Habits. The most durable behaviour change, he argues, comes not from outcome-based goals — I want to stop clicking phishing links — but from identity-based ones. I am someone who protects this organisation. The behaviour follows the identity because every action becomes a vote for the kind of person you believe yourself to be.

None of this research was conducted in a cybersecurity context. All of it applies directly to why security awareness fails — and how to fix it.

The Model That Isn’t Working & Why

The standard cybersecurity awareness model runs something like this.

Show employees the threat. Demonstrate the consequences. Run a phishing simulation. Catch the people who fail. Remediate. Repeat.

This model treats behaviour change as a compliance problem. The assumption is that if the threat is visible enough, and the consequences are clear enough, rational actors will change their behaviour to avoid the bad outcome.

But employees are not primarily rational actors making calculated risk decisions. They are people with identities, with social contexts, with competing pressures, and with a fundamentally human tendency to behave consistently with how they see themselves.

And how does the standard security awareness model ask employees to see themselves? As potential victims. As people who might make a mistake. As the weakest link!

That framing is not only scientifically unsound. It’s actively counterproductive. Employees who see themselves as potential victims don’t become more vigilant. They become more anxious. Anxiety produces avoidance, not engagement. Employees who are frightened of making a mistake don’t report phishing attempts; they hide them. They don’t raise concerns; they hope nothing goes wrong. They don’t ask questions; they comply minimally and privately pray the test doesn’t catch them.

That is fear-based compliance. And fear-based compliance is the most fragile security control in existence — because the moment the fear fades, which it always does, the behaviour reverts.

The Faster, More Durable Alternative

The research points consistently toward a different model. One built not on fear but on identity.

Social identity theory — developed by Henri Tajfel and John Turner in the 1970s and 1980s — shows that people derive significant parts of their self-concept from the groups they belong to and the roles they occupy within those groups. When membership of a group is psychologically salient — when someone is actively thinking of themselves as part of that group — their behaviour consistently aligns with the norms and values of that group.

Applied to security awareness, this means the most powerful question isn’t “do you understand the threat?” but “who do you see yourself as in this organisation?”

If an employee sees themselves as someone whose actions protect their colleagues, their customers, and their organisation — and if that identity is made salient before the security behaviour is requested — the research strongly predicts they will behave consistently with that identity.

Not because they’re frightened. Because it’s who they are.

Fogg’s research shows consistently that behaviour attached to existing identity requires significantly less motivation to sustain than behaviour driven by fear or external incentive. Clear’s synthesis shows that identity-based habits are more resistant to disruption under pressure — precisely the condition that matters most in security, when someone receives a suspicious email under time pressure with competing demands on their attention.

The employee who has been told repeatedly that they are a potential victim will, under pressure, revert to avoidance. The employee who genuinely sees themselves as a protector will, under pressure, pause and question. That pause is worth more than any phishing simulation ever run.

From Theory To Programme Design

The shift from fear-based to identity-based security awareness isn’t a complete redesign of every programme. It’s a reframing of how every element of the programme speaks to the person receiving it.

It starts with the opening of every security conversation — not “here is the threat you need to avoid” but “here is the role you play in keeping this organisation safe.” Not “you are the weakest link” but “you are the most important layer of defence we have.”

It continues through role-based training — moving away from the generic phishing module that goes to everyone from the receptionist to the CFO, toward content that speaks specifically to who someone is and what they do. The accountant who receives training about invoice fraud in financial systems isn’t just receiving relevant content. They’re being told, “you specifically, in your specific role, are someone whose decisions matter in this specific way.” That’s identity activation built into programme design.

It extends into nudges — the real-time behavioural architecture that Thaler and Sunstein’s work shows is more effective than motivation-based interventions at changing behaviour in the moment. The best security nudges don’t warn or frighten. They activate identity precisely when it matters. A just-in-time prompt that asks “does this look right to you?” before someone clicks a suspicious link does something a training session from three months ago cannot — it engages the protector identity at the moment of decision rather than relying on fear-based motivation that has long since faded.

And it shows up in how security leaders talk about incidents and near-misses. Not “someone failed the phishing simulation” but “someone caught something suspicious and reported it — exactly what we need.” The behaviours that get celebrated shape the identity that gets reinforced.
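For anyone who wants to see what the just-in-time nudge described above might look like in practice, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a real product’s logic: the `LinkContext` fields, the `nudge_for` function, and the prompt wording are all hypothetical, and a real mail client or gateway would supply its own signals.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LinkContext:
    """Hypothetical signals available at the moment of the click."""
    sender_domain: str       # domain the email claims to come from
    link_domain: str         # domain the link actually points to
    first_time_sender: bool  # True if this sender has never emailed the recipient


def nudge_for(ctx: LinkContext) -> Optional[str]:
    """Return an identity-framed prompt if the click deserves a pause, else None."""
    mismatched = ctx.sender_domain.lower() != ctx.link_domain.lower()
    if not (mismatched or ctx.first_time_sender):
        return None  # stay quiet on routine links so the prompt keeps its meaning
    # Assumed wording: it asks for judgement rather than issuing a warning.
    return (
        "Does this look right to you? "
        "You know what normal looks like in your role. "
        "If it feels off, report it."
    )
```

The design choice worth noticing is the silence on routine links. A prompt that fires on every click becomes background noise, while one that appears only at a genuine decision point has a chance of engaging the protector identity.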

Scaling Identity Across The Organisation

The most powerful expression of identity-based behaviour change at organisational scale is the security ambassador programme — and it is one of the most underused tools available to security leaders.

When you identify individuals across the organisation, give them the specific role of security champion or ambassador, and make that identity named, recognised, and socially visible, you are doing something the science strongly predicts will work. You are not just training someone. You are giving them a role. A group membership. A professional identity that, according to Tajfel and Turner’s social identity theory, will drive consistent behaviour far more reliably than any compliance-based intervention.

This model isn’t new and it isn’t experimental. Community health worker programmes have used it for decades to drive sustained behaviour change in HIV prevention and vaccination — consistently outperforming information campaigns precisely because the messenger shares the identity of the people they’re working with. Workplace safety champion programmes — the model behind the Alcoa safety transformation that became a benchmark for culture change in high-risk environments — used the same mechanism to make safety a professional identity rather than a compliance requirement. And in financial services specifically, vulnerable customer champion programmes embedded in customer-facing teams are demonstrating exactly what the FCA’s own research predicted — more consistent and more sustained behaviour change than policy and training alone can achieve.

The mechanism in every case is identical. The ambassador isn’t just a messenger. They are a living embodiment of the desired behaviour — someone whose visible identity makes that behaviour normal, expected, and socially reinforced within a specific team context.

The network effect extends that impact further. Security ambassadors don’t just change their own behaviour. They create social proof within their teams — they normalise security-conscious behaviour, surface concerns in ways that feel peer-to-peer rather than top-down, and make it psychologically safe for others to raise questions because there is someone nearby whose role makes those conversations natural. They are, in nudge theory terms, a standing contextual signal that security-conscious behaviour is what people here do — not what the security team asks us to do.

There is also a bidirectional effect worth naming. The most consistent finding across ambassador programmes in every sector is that the ambassadors themselves show the strongest behaviour change of anyone in the programme. Taking on the identity of ambassador reinforces and deepens the behaviour in the person carrying that identity — which means the programme’s most committed advocates are also its most durable practitioners.

The most advanced organisations are now bringing all of this together under what is increasingly referred to as human risk management or security behaviour management, moving beyond point-in-time training toward continuous behavioural monitoring, personalised interventions, real-time nudges, and human ambassador networks that treat the human layer not as a compliance problem to be periodically reminded but as a dynamic risk domain to be continuously understood and actively shaped.

The science behind identity theory, nudge theory, and self-perception research is the intellectual foundation of that discipline. The organisations building genuine human risk management capability are, whether they name it this way or not, doing identity-based behaviour change at scale — through their programmes, their nudges, and the people they have empowered to carry the protector identity into every corner of the organisation.

Psychological safety underpins all of it. Employees whose identity includes being a protector will only act on that identity if the culture makes it safe to do so. If raising a concern leads to embarrassment, if reporting a mistake leads to blame, if pausing to question leads to friction, the identity is activated but the behaviour is suppressed.

Psychological safety isn’t the soft layer of the security culture conversation. It’s the hard infrastructure that allows identity-based behaviour to actually manifest in the moments that matter.

The Uncomfortable Conclusion

The security awareness industry has spent billions of pounds and dollars on a model that the behavioural science has been questioning for 50 years. The good news is that the most sophisticated organisations are finding their way toward a better one: through role-based training, through nudges, through security ambassador programmes, and through the emerging discipline of human risk management.

But most organisations are still at the beginning of that journey. Still running the same generic awareness programme. Still measuring completion rates rather than behaviour change. Still treating the human layer as a compliance problem rather than a risk domain.

Not because the people designing these programmes are wrong about the threats. Because they’ve been applying the wrong model of how human beings actually change.

The fastest way to change security behaviour isn’t to frighten people into acting differently. It’s to help them see themselves as someone who acts differently.

Identity first. Risk second. Possibility to close.

That sequence — and the science behind why it works — is something every security leader should understand before they design their next awareness programme, their next all-hands session, or their next conversation with an employee about why cyber matters.

Now I Want To Hear From You…

The security awareness industry has been measuring the wrong things for 50 years: completion rates instead of behaviour change, training attendance instead of incident reporting rates, phishing click rates instead of psychological safety scores.
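To make one of those contrasts concrete, here is a small Python sketch that puts a reporting rate next to the familiar click rate for a single phishing simulation round. The `SimulationRound` structure and the numbers are made up for illustration; your own platform will export its data differently.

```python
from dataclasses import dataclass


@dataclass
class SimulationRound:
    """Counts from one phishing simulation round (hypothetical structure)."""
    emails_sent: int
    clicked: int
    reported: int


def click_rate(r: SimulationRound) -> float:
    """Share of recipients who clicked: the metric most programmes already track."""
    return r.clicked / r.emails_sent if r.emails_sent else 0.0


def report_rate(r: SimulationRound) -> float:
    """Share of recipients who reported the email: the protective behaviour."""
    return r.reported / r.emails_sent if r.emails_sent else 0.0


# Made-up numbers, purely for illustration.
example = SimulationRound(emails_sent=200, clicked=20, reported=50)
print(f"click rate: {click_rate(example):.0%}, report rate: {report_rate(example):.0%}")
```

Tracking the second number over time says something about whether people are acting as protectors, which the click rate on its own cannot.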

What would you measure instead and what would change in your programme if you did? Tell me in the comments over on LinkedIn where we’re having this conversation.